In this blog post we walk through the steps taken to troubleshoot a degraded Azure Traffic Manager Endpoint.

Recently, I finished a project for a customer to build a Windows 10 Always On VPN solution. Because of the large number of users who would be connecting (1500+), they wanted some form of public load balancing to distribute traffic equally across two on-premises Remote Access Server (RAS) boxes.

Azure Traffic Manager, a DNS-based public load balancer, was the solution chosen to achieve this. I'm not going to deep dive into the specifics; if you wish to know more, see Microsoft's official documentation below:

Azure Traffic Manager | Microsoft Docs

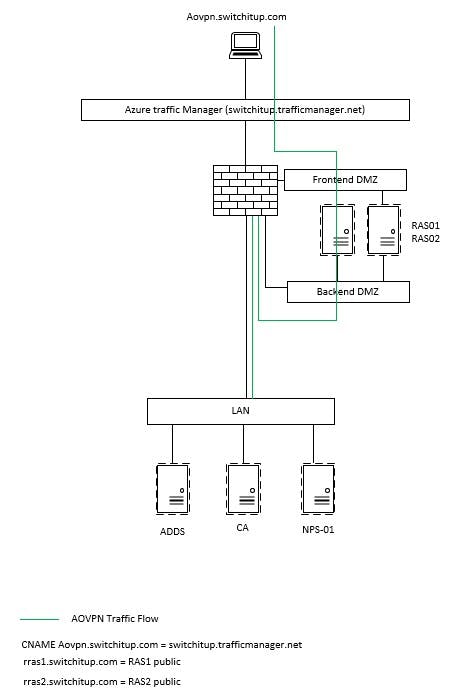

The Visio diagram below shows the overall architecture of the Always On VPN setup. The RAS boxes in question are located in a DMZ for security purposes. Their external IPs are registered in DNS as rras1.switchitup.com and rras2.switchitup.com, and Azure Traffic Manager uses these records to distribute the traffic load equally across both on-premises servers.

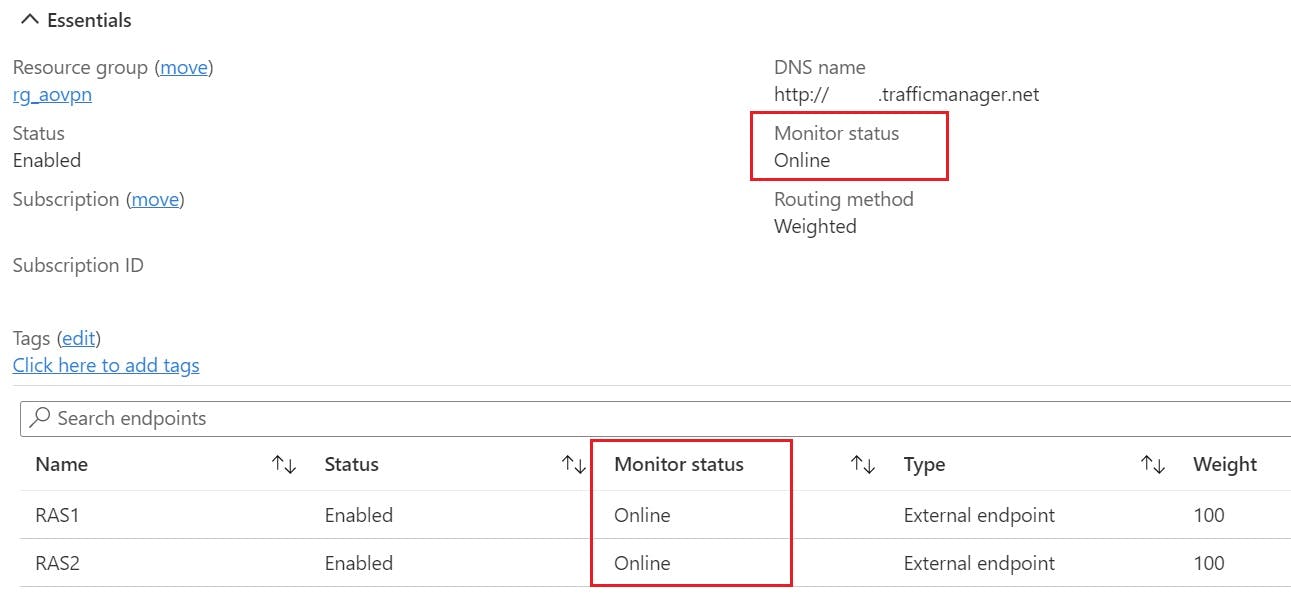

Azure Traffic Manager Setup overview

Here is a brief overview of the Traffic Manager settings:

The Problem?

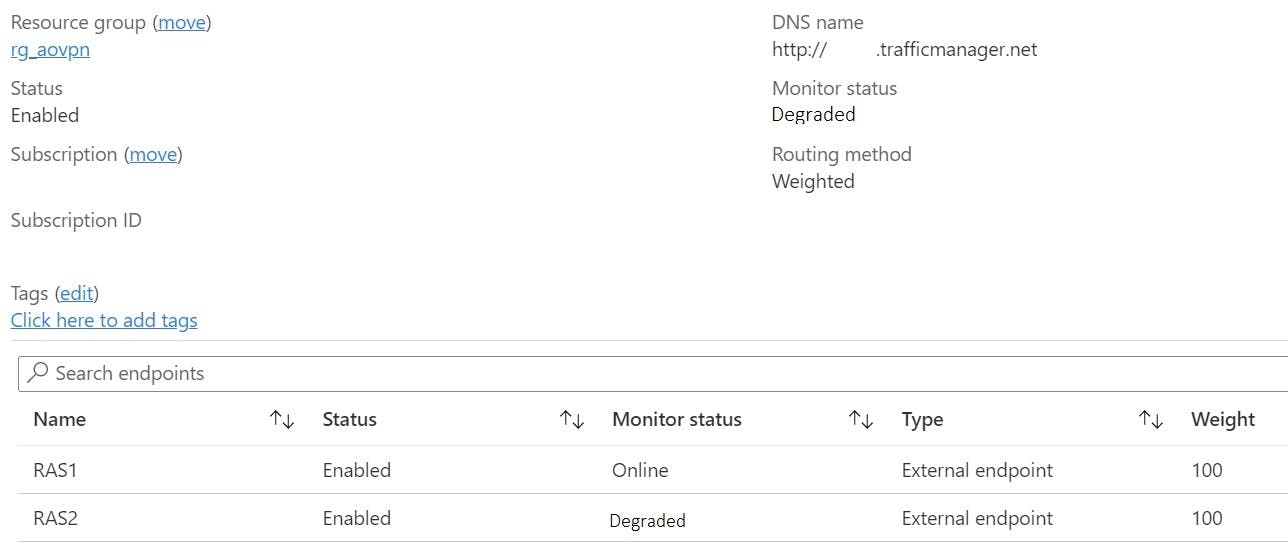

Everything worked when initially set up; however, the RAS2 endpoint suddenly began constantly displaying a "Degraded" state.

A degraded endpoint won't receive traffic; traffic is routed to any other "healthy" endpoints instead. The exception is when all endpoints are degraded, in which case traffic is routed to any endpoint on a best-effort basis.
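The routing rule described above can be modelled in a few lines of Python. This is only a simplified sketch of the behaviour, not Azure's actual implementation; the function name `routable_endpoints` and the status dictionary are hypothetical, and the status strings mirror the "Online" and "Degraded" monitor states shown in the portal.

```python
from typing import Dict, List


def routable_endpoints(monitor_status: Dict[str, str]) -> List[str]:
    """Return the endpoints that are eligible to receive traffic.

    Simplified model (an assumption, not Azure's code): degraded
    endpoints are skipped unless every endpoint is degraded.
    """
    healthy = [name for name, status in monitor_status.items()
               if status == "Online"]
    # Fallback: with no healthy endpoint left, return all of them (best effort).
    return healthy if healthy else list(monitor_status)
```

With RAS1 online and RAS2 degraded, `routable_endpoints({"RAS1": "Online", "RAS2": "Degraded"})` returns only `["RAS1"]`, which is why one degraded endpoint means a single server carries the whole load.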

Microsoft's troubleshooting documentation goes deeper into how to probe endpoints for a particular HTTP response; more specifically, a response of 200 means the endpoint should be healthy.

Troubleshooting degraded status on Azure Traffic Manager | Microsoft Docs

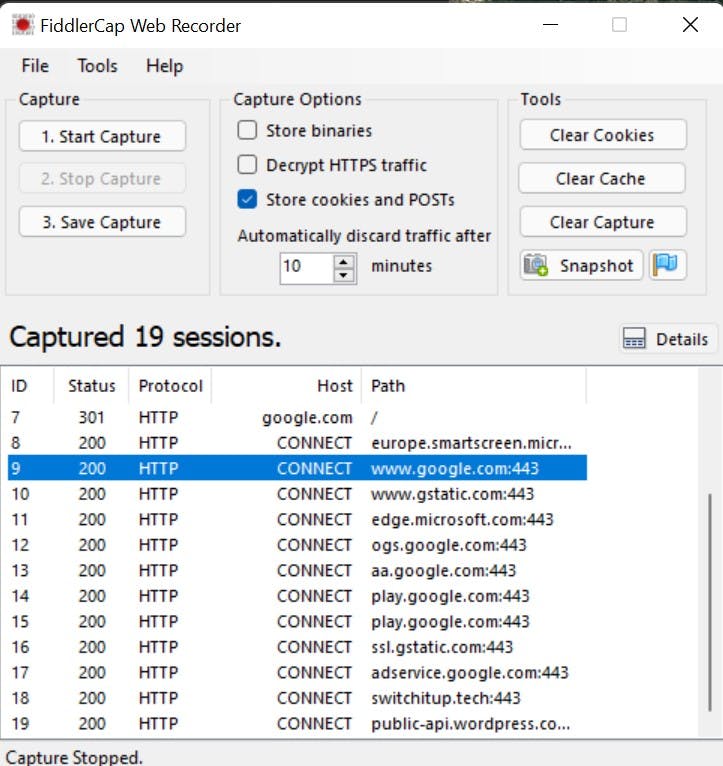

Enter FiddlerCap Web Recorder, a basic program used to probe websites for their HTTP response. The picture shows an example of a capture made when going to google.com; notice the healthy response of 200.
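If you would rather script the same kind of probe than use a GUI tool, a minimal sketch using only the Python standard library might look like the following. The function name `probe_status` is hypothetical, and the protocol and path you probe are assumptions that should match whatever the Traffic Manager profile is configured to monitor. Note that `urlopen` follows redirects, so the code returned is that of the final response.

```python
import urllib.error
import urllib.request
from typing import Callable, Optional


def probe_status(url: str, timeout: float = 10.0,
                 opener: Callable = urllib.request.urlopen) -> Optional[int]:
    """Return the HTTP status code for url, or None if no response arrived."""
    try:
        with opener(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # The server answered, just not with a success code (e.g. 404 or 503).
        return err.code
    except (urllib.error.URLError, OSError):
        # DNS failure, refused connection, TLS error or timeout: no response.
        return None
```

Calling `probe_status("https://rras1.switchitup.com/")` and then the same for `rras2` gives a quick side-by-side comparison: a status code means the box answered, while `None` means nothing came back at all.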

Probing the RAS boxes on their published external DNS names gives the following results: RAS1 returns 200 but RAS2 returns nothing, which could mean a number of things:

- Botched configuration on RAS2

- RAS2 is not published correctly through the onsite firewall for 80/443

- A misconfigured endpoint in Azure Traffic Manager

- Something Else

Troubleshooting the Azure Traffic Manager

- Verified the FQDN of the RAS2 endpoint matched what was published in DNS = YES

- Checked the external Firewall rules matched RAS1 = YES

- Confirmed the Configuration on RAS2 matched RAS1 = YES

- Verified all Boxes had relevant Certificates with subject names = YES

- Tested without Windows firewall on = NO CHANGE.
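A check that complements the list above is a raw TCP connection test against port 443, run from outside the network against both servers. The sketch below is an illustration rather than something the original troubleshooting used; the function name `tcp_port_open` is hypothetical and only the standard `socket` module is involved.

```python
import socket


def tcp_port_open(host: str, port: int, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port can be established."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name-resolution failures.
        return False
```

If `tcp_port_open("rras1.switchitup.com", 443)` succeeds while the same call for `rras2.switchitup.com` fails, something between the client and the RAS2 service is dropping or rejecting the traffic, even when the configuration appears identical on paper.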

The Solution

I was slightly perplexed by the situation, as everything configuration-wise matched like-for-like. After some further investigation, the issue turned out to lie with the RAS boxes themselves, more specifically with RAS2.

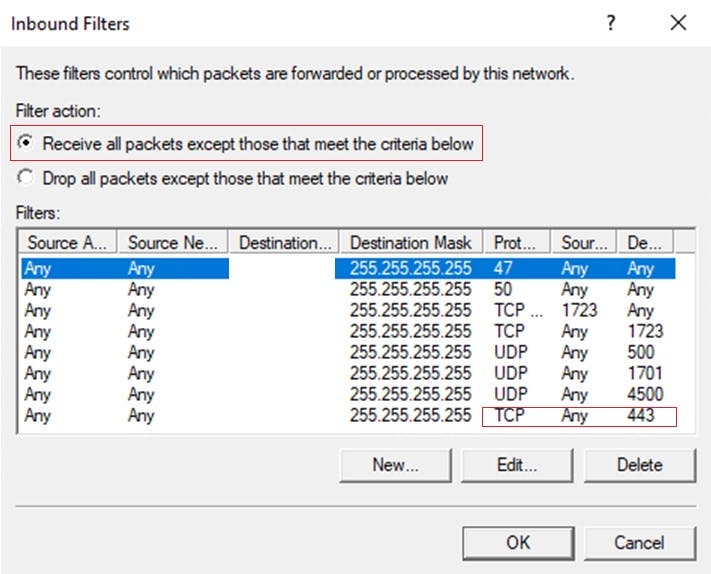

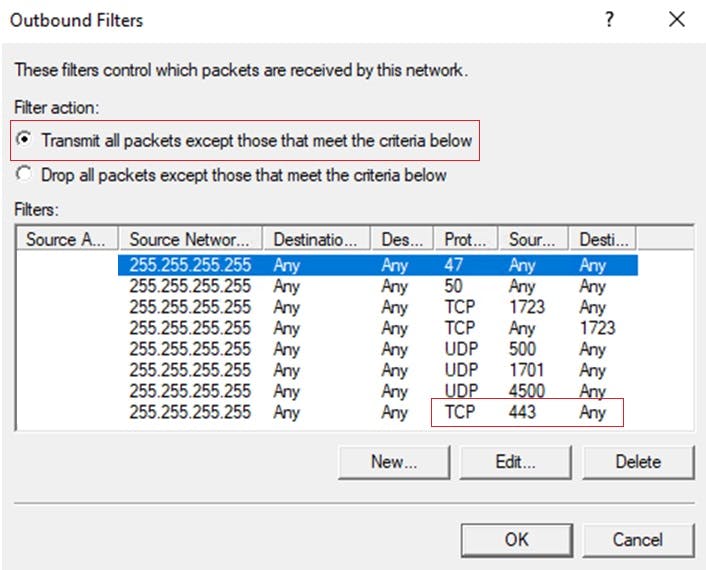

Somehow, inbound and outbound filters had been applied in the RAS2 Remote Access settings and, as a result, were blocking 443 traffic to the box.

On removing these filters and refreshing Azure Traffic Manager, everything was finally back to a healthy state.